The Transformative Power of AI and Its Ethical Quandaries

The rise of Artificial Intelligence (AI) technologies has transformed sectors ranging from healthcare and finance to manufacturing and transportation. These advancements have enabled machines to perform certain complex tasks more efficiently than humans, enhancing productivity and operational efficiency. That power brings responsibility: as we embrace these changes, we must confront the significant ethical dilemmas they raise and learn how to navigate this new landscape effectively.

Among the most pressing challenges is job displacement. The increasing automation of processes poses a real threat to traditional employment, as machines are increasingly capable of performing tasks that once required human ingenuity and judgment. For instance, in the automotive industry, robots have transformed assembly lines, leading to increased production rates but also fewer jobs for assembly workers. A report by the McKinsey Global Institute suggests that by 2030, as many as 25 million jobs in the U.S. could be at risk due to automation. This statistic raises critical questions about how society can support those affected by technological job loss. Retraining programs and workforce development initiatives are becoming essential components of discussions surrounding AI implementation.

Another significant ethical concern is bias in algorithms. Many AI systems rely on vast datasets for training, which can inadvertently embed societal biases. For example, facial recognition technology has faced scrutiny for exhibiting racial bias, performing poorly on individuals with darker skin tones. One study found that darker-skinned women were misidentified at rates as high as 35%, compared with less than 1% for lighter-skinned individuals. To combat these biases, it is essential to diversify training datasets and implement fairness checks so that AI operates equitably across different populations.
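One simple form a fairness check can take is comparing error rates across demographic groups on a labeled evaluation set. The sketch below is illustrative only: the group names, the records, and the helper functions `error_rate_by_group` and `max_error_gap` are hypothetical, and a real audit would use far more data and more than one metric.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """Return {group: fraction of records where prediction != label}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, label, prediction in records:
        totals[group] += 1
        if prediction != label:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def max_error_gap(rates):
    """Largest difference in error rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical evaluation records: (group, true_label, predicted_label).
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

rates = error_rate_by_group(records)
gap = max_error_gap(rates)
print(rates, gap)
```

A large gap between groups, as in this toy data, is a signal to revisit the training data and the model before deployment.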

Moreover, the question of accountability looms large in discussions about AI. When an AI system malfunctions or causes harm, determining who bears responsibility (developers, deploying organizations, or the AI systems themselves) can be complex. High-profile incidents, like accidents involving autonomous vehicles, have highlighted the need for clear accountability frameworks. Legislation that outlines liability in these scenarios is required to establish trust in AI systems.

In light of these challenges, proactive solutions are emerging. Among these is the establishment of regulatory standards that create a framework for ethical AI deployment across industries. Regulatory bodies in the U.S. are increasingly exploring guidelines for the responsible use of AI technologies, balancing innovation with public safety.

Transparency is another critical aspect: AI systems should be understandable and accessible to the people who use them. Explainability fosters trust and lets individuals grasp how decisions are made, which is particularly important in high-stakes areas like healthcare or criminal justice.

Finally, the importance of continuous education cannot be overstated. As the landscape of employment changes, there is a growing need for training programs aimed at equipping the workforce with the skills required to thrive in an AI-driven economy. By investing in educational infrastructure that focuses on technology and ethics, we can prepare future generations to navigate these emerging challenges effectively.

In conclusion, as discussions around ethics in automation gain momentum, it is crucial to explore these challenges and solutions thoroughly. Understanding the multifaceted nature of AI’s impact on society will empower us to harness its benefits while ensuring ethical standards remain at the forefront of technological advancement. Through informed dialogue and collaborative effort, we can navigate the future of AI with both innovation and integrity.

Addressing Job Displacement and Workforce Transformation

In an era where Artificial Intelligence (AI) is revolutionizing industries, the conversation around job displacement has become increasingly urgent. Automation is reshaping traditional career paths, and the rapid pace of these changes is raising significant concerns about the future of work. The automation of tasks once performed by humans threatens not just low-skilled jobs, but also professional roles in areas like finance, law, and healthcare. As companies seek to optimize efficiency and reduce costs, the potential for millions of workers to be replaced by machines is a reality that cannot be ignored.

A World Economic Forum report predicted that by 2025, the shift towards automation could displace 85 million jobs globally while also creating 97 million new roles, primarily geared towards human-AI collaboration. On those figures the net effect is a gain of roughly 12 million roles, but the new roles will not necessarily go to the workers who were displaced. This presents a paradox: while automation promises increased productivity, it simultaneously threatens to exacerbate income inequality and social unrest. The challenge, then, lies not in whether automation will occur, but in how society navigates the transition to a new labor market.

To address the fallout from automation, a multifaceted approach is required. Here are several proposed solutions that aim to mitigate the negative impact on workers:

- Reskilling and Upskilling Initiatives: Organizations and governments must collaborate to create training programs designed to equip the workforce with new skills that are relevant in an AI-driven economy. Examples include coding bootcamps, digital literacy workshops, and vocational training tailored to emerging job markets.

- Universal Basic Income (UBI): Some propose that governments consider UBI as a possible solution to cushion the financial impact of job loss due to automation. By providing citizens with a guaranteed income, society may reduce the economic pressure on individuals while they transition to new employment opportunities.

- Public-Private Partnerships: Engaging both the public and private sectors in creating transitional programs can help bridge the gap between displaced workers and new job openings. These partnerships can foster innovation and ensure that workforce initiatives are both sustainable and effective.

Meanwhile, the tech industry must confront its responsibilities in this transition. Companies should prioritize transparency and ethical considerations when deploying AI technologies. This includes evaluating the potential societal impact of their innovations and committing to minimize workforce disruption. Establishing partnerships with educational institutions for ongoing workforce development can also help create a more resilient labor market.

As we grapple with the implications of automation, the conversation must cover not only job displacement but also the value of enhancing human capabilities through collaboration with AI. Technology will continue to evolve, and with it the responsibility to establish ethical frameworks that guide its deployment and keep human workers at the center of the evolving landscape.

| Challenges | Solutions |
|---|---|
| Bias in AI Algorithms | Implementing fairness assessments and diversity in training datasets. |
| Privacy Concerns | Developing robust data protection regulations and consent frameworks. |

In the fast-evolving realm of Artificial Intelligence (AI), ethical considerations surrounding automation have garnered increased attention. One critical challenge is the inherent bias present in AI algorithms. Implicit biases within datasets can lead to unfair outcomes, perpetuating inequalities in various sectors. As automation takes hold across industries, ensuring fairness is paramount. To tackle this issue, organizations are encouraged to conduct comprehensive fairness assessments and incorporate diversity within their training datasets, which can mitigate bias and improve decision-making processes.

Another pressing concern is privacy. As AI systems process vast amounts of data, the risk of privacy invasions grows. To counteract these threats, there is a strong call for stringent data protection regulations alongside clearly defined consent frameworks. These solutions not only protect individual rights but also foster trust in AI technologies, enabling a more ethical automation landscape.

By addressing these challenges proactively, the future of AI and automation can align more closely with ethical standards, creating a foundation for responsible technological advancement. As the discussion continues, stakeholders, technologists, and ethicists alike must collaborate to navigate these complexities effectively.

Navigating Ethical Considerations in AI Deployment

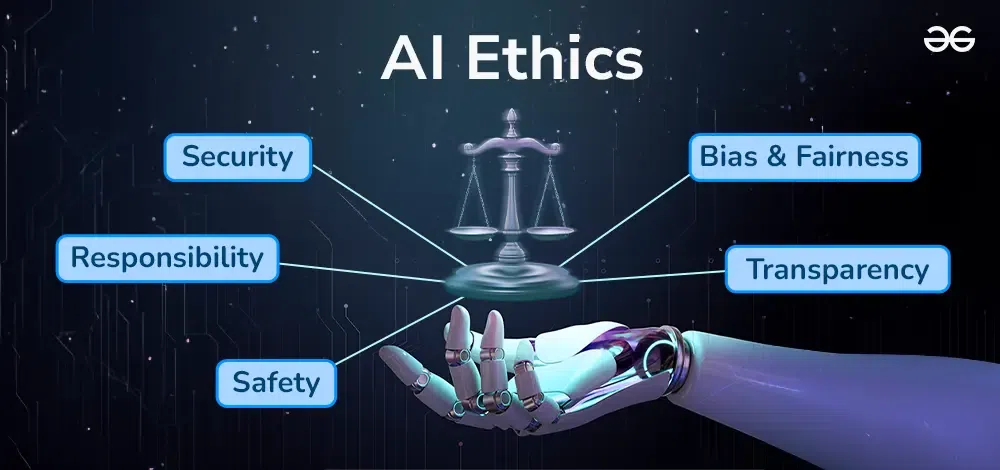

As organizations integrate Artificial Intelligence into their operational frameworks, the ethical implications of AI deployment have emerged as a critical area of concern. The intersecting realms of ethics and technology raise questions about accountability, decision-making, fairness, and transparency in the systems that increasingly govern our lives. The rise of machine learning and AI algorithms, which can make decisions without human intervention, underscores the pressing need for ethical standards and frameworks that guide their development and application.

One of the primary ethical challenges in AI involves bias and discrimination. Numerous studies have illustrated how AI systems can perpetuate or even exacerbate existing biases present in the training data. For example, in 2018, an investigation found that certain facial recognition systems exhibited significant racial bias, misclassifying individuals with darker skin tones far more often than those with lighter skin. Such disparities not only undermine the credibility of AI technologies but also pose risks of unfair treatment in critical sectors like law enforcement and hiring processes. This calls for ethical oversight to ensure that AI systems are trained on diverse datasets and are audited regularly for biases.

Additionally, transparency in AI decision-making processes is paramount. Often referred to as the “black box” problem, many AI algorithms operate in ways that are not easily interpretable or comprehensible to stakeholders, including the individuals whose lives they may affect. In healthcare, for instance, AI-driven diagnostic tools can recommend treatment plans without providing clear reasoning for their choices. This lack of transparency can lead to mistrust among patients and providers, thereby stifling the potential of AI technologies to enhance patient care.
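To make the contrast with a black box concrete, here is a minimal sketch of the simplest interpretable case: a linear score whose output can be broken down into per-feature contributions. The weights, feature names, and the `explain_linear_score` helper are all hypothetical and chosen for illustration. Real diagnostic tools are usually far more complex, and explaining them requires dedicated techniques.

```python
def explain_linear_score(weights, bias, features):
    """Return (score, contributions ranked by absolute size) for a linear model.

    Each contribution is weight * feature value, so the score is exactly
    the bias plus the sum of the contributions.
    """
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


# Hypothetical risk model and patient record (not real clinical values).
weights = {"age_decades": 0.25, "blood_pressure_high": 2.0, "smoker": 1.5}
bias = -1.0
patient = {"age_decades": 4, "blood_pressure_high": 1, "smoker": 0}

score, ranked = explain_linear_score(weights, bias, patient)
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
print(f"score: {score:.2f}")
```

Here a clinician can see which inputs drove the score and by how much, which is the kind of reasoning an opaque model does not expose by default.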

To counter these challenges, several proposed ethical frameworks have surfaced, focusing on responsible AI deployment. Here are key strategies:

- Establishing Ethical Guidelines: Organizations should develop and adhere to a set of ethical guidelines that govern AI development. This would involve incorporating principles such as fairness, accountability, and transparency into the design and implementation phases, ensuring that AI systems operate without bias and with clear rationale.

- Inclusion of Diverse Perspectives: Engaging a broad range of stakeholders, including ethicists, social scientists, industry leaders, and affected communities, is essential in the AI development process. Their insights can help mitigate biases and ensure that diverse perspectives are considered, fostering AI systems that are inclusive and equitable.

- Regular Auditing and Monitoring: Implementing ongoing evaluations of AI systems can identify biases and inefficiencies throughout their lifecycle. By continuously monitoring algorithms and their outcomes, organizations can proactively address issues and ensure they remain aligned with ethical standards.
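The auditing strategy above can be sketched as a recurring check on batches of decisions. This example compares approval rates across groups and flags a batch when the lowest rate falls below a chosen fraction of the highest. The 0.8 threshold follows the "four-fifths" rule of thumb used in U.S. employment guidance, but it is an assumption here, as are the data and the `selection_rates` and `audit_batch` helpers.

```python
def selection_rates(decisions):
    """decisions: iterable of (group, approved). Returns {group: approval rate}."""
    counts = {}
    for group, approved in decisions:
        total, positive = counts.get(group, (0, 0))
        counts[group] = (total + 1, positive + int(approved))
    return {group: positive / total for group, (total, positive) in counts.items()}


def audit_batch(decisions, min_ratio=0.8):
    """Flag the batch if the lowest approval rate is under min_ratio of the highest."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    ratio = min(rates.values()) / highest if highest > 0 else 1.0
    return {"rates": rates, "ratio": ratio, "flagged": ratio < min_ratio}


# Hypothetical batch of decisions: (group, approved).
batch = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

report = audit_batch(batch)
print(report)
```

Running such a check on a schedule, rather than once before launch, is what lets it catch disparities that appear as data and usage drift over time.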

The conversation surrounding ethics in AI is not only crucial for ensuring the fair treatment of individuals but also for fostering public trust in these emerging technologies. As AI continues to evolve and permeate various sectors, it is essential for organizations to prioritize ethical considerations in their strategic planning. By doing so, they don’t just improve the reliability and fairness of their systems; they also pave the way for a future where AI serves as a tool for equitable growth, rather than a source of division and mistrust.

As stakeholders navigate these ethical challenges, it becomes increasingly important to recognize the role of industry regulations and guidelines in shaping the discourse around AI ethics. Governments must step up to establish legislative frameworks that protect rights and promote accountability in AI systems, ensuring that the benefits of automation do not come at the cost of societal wellbeing.

Conclusion: Charting a Responsible Path Forward

The intersection of Artificial Intelligence and ethics in automation presents a landscape rich with both opportunities and challenges that demand our immediate attention. As AI technologies continue to evolve, they hold the potential to reshape industries and societal structures alike. However, with this potential comes the pressing need to address the ethical dilemmas that arise from their deployment.

Central to this conversation is the imperative to address bias and discrimination in AI systems. Empirical evidence underscores how machine learning algorithms, often trained on skewed datasets, can propagate existing inequalities. Organizations must prioritize the development of ethical frameworks that not only acknowledge these biases but actively work to eliminate them through diverse data sourcing and continuous monitoring.

Additionally, the lack of transparency inherent in many AI systems complicates accountability and public trust. Stakeholders must advocate for systems that clearly communicate their decision-making processes, ensuring that users—from patients in healthcare to job candidates—understand how and why decisions are made. This transparency is critical for fostering trust and acceptance among those who interact with these technologies.

Ultimately, addressing the ethical challenges posed by AI in automation will require a collaborative effort among technologists, ethicists, policymakers, and the public. By embracing inclusivity and ethical guidelines, we can create AI systems that not only enhance efficiency but also contribute positively to societal welfare. The future of AI should not only be about advancement but also about ensuring that these advancements promote equality, fairness, and trust in our automated world. In doing so, we pave the way for a future where AI serves humanity, rather than defining it.